Some Cool Title

A charging station was planned for the robot in this project. It handles battery charging and fresh-water tank refilling, as well as dirty-water discharge. The robot’s navigation system uses sensor fusion, combining a high-precision LiDAR with a 3D depth camera. The LiDAR is responsible for long-range mapping and for identifying structural boundaries such as walls, while the 3D depth camera provides a wide-angle 3D view to detect low-profile or moving obstacles that may fall outside the LiDAR’s horizontal scanning plane. This integrated approach supports safe autonomous operation in complex, dynamic indoor environments.
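The fusion idea above can be illustrated with a minimal sketch. It assumes the depth camera's obstacle points have already been projected onto the floor plane in the robot frame, and that the LiDAR beams are evenly spaced over a full circle. The function name `fuse_ranges` and its parameters are hypothetical and not taken from the project; the actual fusion method used on the robot is not described here.

```python
import math

def fuse_ranges(lidar_ranges, depth_points, max_range=10.0):
    """Return per-beam ranges, shortened wherever a depth-camera point is closer.

    lidar_ranges: list of ranges in metres; beam i points at angle 2*pi*i/n.
    depth_points: iterable of (x, y) obstacle points in metres, robot frame,
                  already projected onto the floor plane (an assumption).
    """
    n = len(lidar_ranges)
    fused = [min(r, max_range) for r in lidar_ranges]
    for x, y in depth_points:
        dist = math.hypot(x, y)
        angle = math.atan2(y, x) % (2 * math.pi)
        # Pick the LiDAR beam whose bearing is closest to this point.
        beam = int(round(angle / (2 * math.pi) * n)) % n
        fused[beam] = min(fused[beam], dist)
    return fused

# Four beams (0, 90, 180, 270 degrees). The LiDAR sees a wall 5 m away in every
# direction, but the depth camera reports a low obstacle 1 m straight ahead.
fused = fuse_ranges([5.0, 5.0, 5.0, 5.0], [(1.0, 0.0)])
```

Taking the per-bearing minimum is a conservative choice: an obstacle reported by either sensor is kept, so a low object missed by the LiDAR plane still blocks the path.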

Due to space and weight constraints in this project, a mini PC with the following specifications was used as the central computer of these robots.

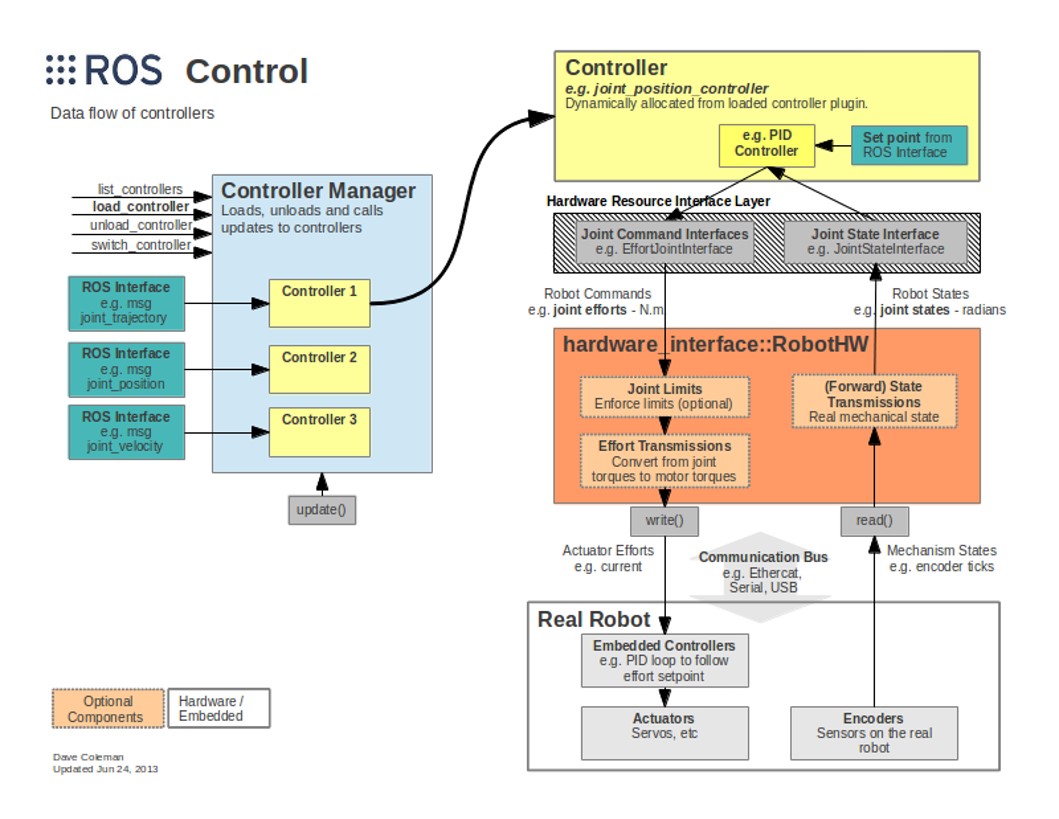

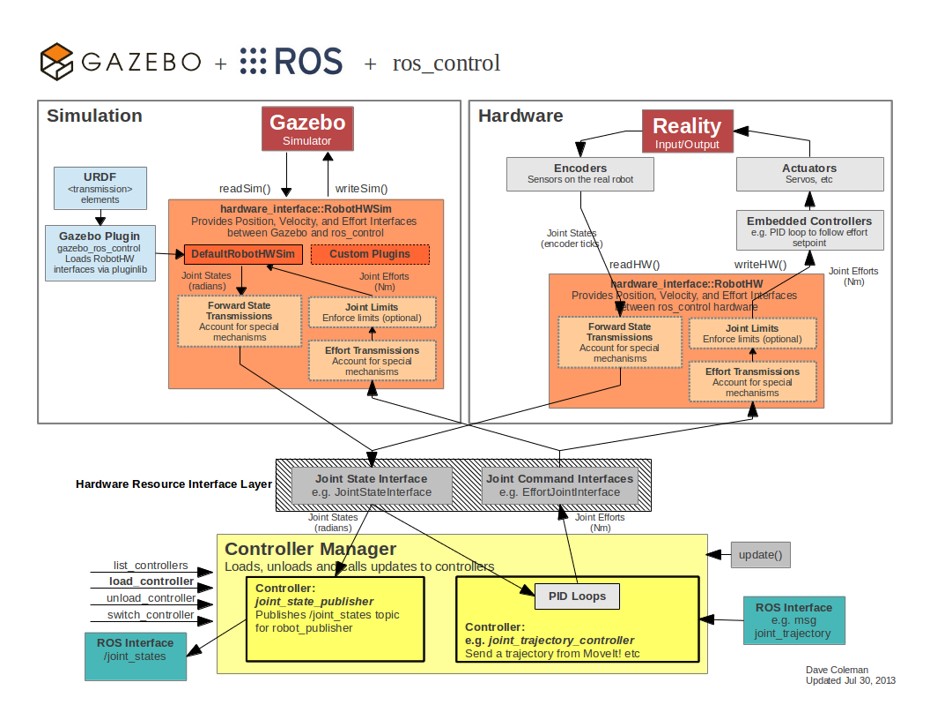

The image below shows a schematic of the structure and data flow in the ROS Control system for a scrubber cleaning robot. In this system, the Controller Manager module is responsible for loading, switching, and updating the controllers. Each controller, such as the Navigation and Path Following Controller or the Brush Rotation Intensity and Water Flow Controller, receives commands via ROS interfaces (for example, messages carrying a target path or cleaning commands).

These controllers then send motion and operational commands to the robot's hardware through the Hardware Interface layer. At this stage, commands are passed to the actuators (the wheel motors, cleaning brush motors, and water pump), while the sensors and wheel encoders report the data used to determine position and cleaning status. The robot's status feedback (such as current location or dirty water level) is then returned to the system.

This structure allows ROS to provide precise, modular, and configurable control over the cleaning and movement functions of the scrubber robot.
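The load, switch, and update roles of the Controller Manager can be sketched in plain Python. This is only a toy model of the pattern, not the ros_control API: the class names, the dictionary standing in for the hardware, and the `brush_duty` and `pump_duty` keys are all hypothetical.

```python
class Controller:
    """Base class: a controller turns a command into an actuator setpoint."""
    def __init__(self, name):
        self.name = name
        self.command = 0.0
        self.running = False

    def update(self, hardware):
        raise NotImplementedError


class BrushController(Controller):
    def update(self, hardware):
        # Clamp the requested brush intensity to the 0..1 duty range.
        hardware["brush_duty"] = max(0.0, min(1.0, self.command))


class PumpController(Controller):
    def update(self, hardware):
        # Clamp the requested water flow to the 0..1 duty range.
        hardware["pump_duty"] = max(0.0, min(1.0, self.command))


class ControllerManager:
    """Toy manager: loads controllers, switches them on or off, updates them."""
    def __init__(self, hardware):
        self.hardware = hardware
        self.controllers = {}

    def load(self, controller):
        self.controllers[controller.name] = controller

    def switch(self, start=(), stop=()):
        for name in stop:
            self.controllers[name].running = False
        for name in start:
            self.controllers[name].running = True

    def update(self):
        # Only running controllers are allowed to write to the hardware.
        for controller in self.controllers.values():
            if controller.running:
                controller.update(self.hardware)


hardware = {"brush_duty": 0.0, "pump_duty": 0.0}  # stand-in for real actuators
manager = ControllerManager(hardware)
manager.load(BrushController("brush"))
manager.load(PumpController("pump"))
manager.switch(start=["brush"])            # pump stays loaded but stopped
manager.controllers["brush"].command = 1.4  # out-of-range request
manager.controllers["pump"].command = 0.5
manager.update()
```

After the update, only the brush setpoint has changed, because the pump controller was loaded but never started. This separation between loading and running is what makes it possible to swap controllers without touching the hardware layer.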

In the diagrams below, the left side is the simulation environment, where the scrubber robot model runs inside Gazebo and communicates with ROS through specialized plugins. This allows testing of wheel motion, brush mechanisms, and robot behavior in different environments before working with real hardware.

On the right side is the real scrubber robot hardware, including wheel encoders, navigation sensors, brush motors, suction motors, and water-spray or pump systems. The hardware sends real sensor data to ROS and receives control commands for driving, cleaning functions, and motion updates.

Between simulation and hardware, the hardware_interface layer connects everything to ROS and ros_control, enabling the system to read joint states, send actuator commands, and manage different robot components in a unified structure. At the bottom, the Controller Manager and PID controllers handle precise control of wheel speeds, navigation, and cleaning mechanisms. This architecture ensures that the scrubber robot performs consistently and reliably in both simulation and real-world operation.

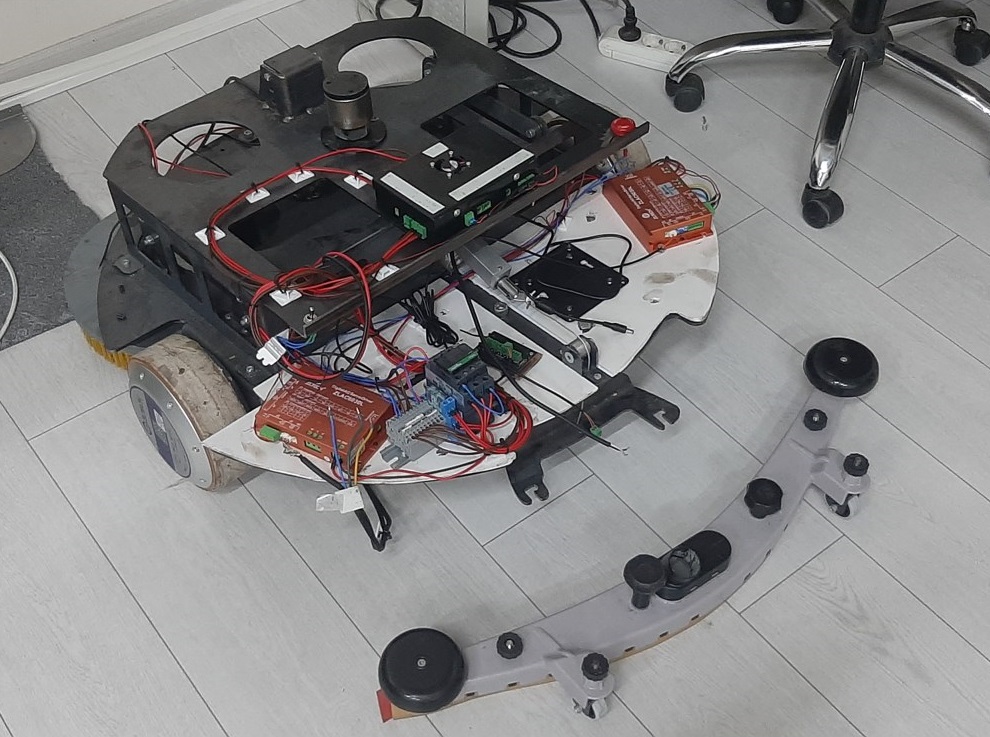
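The read, compute, write cycle described above can be sketched as follows. The example assumes a very simple wheel model with damped first-order dynamics and illustrative PID gains; `SimulatedWheel`, `PID`, and `run_loop` are hypothetical names, and the gains are not the ones used on the robot. A real-hardware backend would expose the same `read` and `write` methods, which is the point of the common interface.

```python
class SimulatedWheel:
    """Stand-in for a simulated wheel joint with toy damped dynamics."""
    def __init__(self):
        self.velocity = 0.0

    def read(self):
        # Joint state as an encoder would report it.
        return self.velocity

    def write(self, effort, dt):
        # Toy model (an assumption): dv/dt = effort - 0.5 * v
        self.velocity += (effort - 0.5 * self.velocity) * dt


class PID:
    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = 0.0

    def step(self, target, measured, dt):
        error = target - measured
        self.integral += error * dt
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def run_loop(wheel, pid, target, steps=2000, dt=0.01):
    """Repeat read -> compute -> write, as a controller update loop would."""
    for _ in range(steps):
        measured = wheel.read()
        effort = pid.step(target, measured, dt)
        wheel.write(effort, dt)
    return wheel.read()


# Illustrative gains only; 2000 steps of 10 ms is 20 s of simulated time.
final_velocity = run_loop(SimulatedWheel(), PID(kp=2.0, ki=1.0, kd=0.0), target=1.0)
```

Because the loop only touches `read` and `write`, the same controller code could be pointed at either the simulated joint or a driver for the real motor.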

The photos below show the control interface systems for the second type of scrubber robot, which handle motion control of the BLDC hub motors.

The image below shows a 3D simulation environment in Gazebo, where an autonomous floor-cleaning scrubber robot is being tested. The robot is positioned at the center of the simulated room, and a wide blue fan-shaped field represents the robot’s LiDAR sensor coverage. This sensor continuously scans the surrounding area to detect walls, obstacles, and people.

A human model and a vending machine are placed in the environment to evaluate the robot’s obstacle-avoidance and safe navigation capabilities. The LiDAR scan is used for real-time mapping, collision prevention, and path-planning, allowing the scrubber robot to operate safely in indoor environments such as malls, warehouses, or office buildings.

This simulation setup is typically used to test autonomous behaviors (including perception, mapping, and navigation) before deploying the cleaning robot in real-world applications.

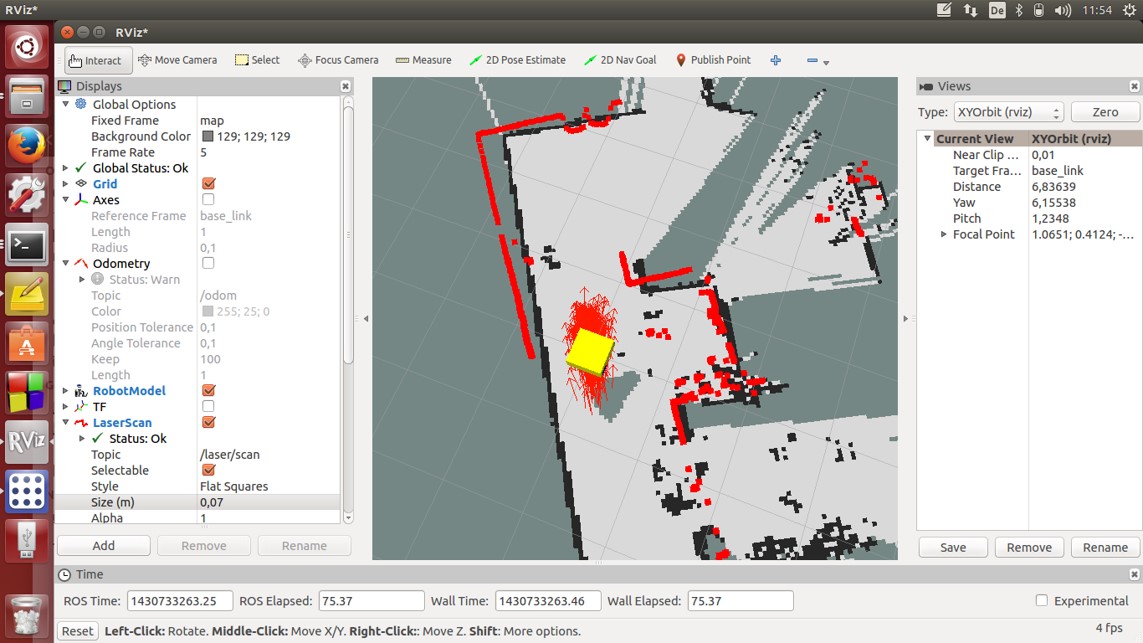
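As a rough illustration of the collision-prevention role of the LiDAR scan described above, the sketch below limits forward speed based on the nearest return inside a forward sector. The parameter names `ranges`, `angle_min`, and `angle_increment` mirror fields of the ROS `sensor_msgs/LaserScan` message; the function `speed_limit`, the sector width, and the distance and speed thresholds are assumptions chosen for the example, not values from the project.

```python
import math

def speed_limit(ranges, angle_min, angle_increment,
                half_width=math.radians(30),  # forward sector half-angle (assumed)
                stop_dist=0.5,                # stop below this distance, m (assumed)
                slow_dist=1.5,                # full speed beyond this, m (assumed)
                cruise_speed=0.6):            # nominal speed, m/s (assumed)
    """Scale forward speed by the nearest valid return in the forward sector."""
    nearest = math.inf
    for i, r in enumerate(ranges):
        angle = angle_min + i * angle_increment
        # Ignore beams outside the sector and invalid readings (inf, nan, <= 0).
        if abs(angle) <= half_width and math.isfinite(r) and r > 0.0:
            nearest = min(nearest, r)
    if nearest <= stop_dist:
        return 0.0
    if nearest >= slow_dist:
        return cruise_speed
    # Linear ramp between the stop and slow-down distances.
    return cruise_speed * (nearest - stop_dist) / (slow_dist - stop_dist)

# Five beams from -90 to +90 degrees; only the centre beam lies in the sector.
scan = [0.2, 0.2, 1.0, 0.2, 0.2]
limit = speed_limit(scan, angle_min=-math.pi / 2, angle_increment=math.pi / 4)
```

A person standing 1.0 m ahead, as in the simulated scene, would slow the robot in this sketch; close returns to the side are ignored because they lie outside the forward sector.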

The image below shows the RViz visualization environment, a ROS-based tool built with a Qt graphical interface. RViz is used here to monitor the autonomous floor-cleaning scrubber robot during mapping and navigation tests.

The central display shows a 2D occupancy grid map generated from the robot’s LiDAR sensor. Black areas represent obstacles, white areas represent free space, and the red points show live LiDAR reflections. The yellow square marks the robot’s current position.

Qt components (such as the left “Displays” panel, the top toolbars, and configuration windows) allow the operator to interact with real-time robot data, adjust visualization settings, and control the mapping process. This Qt-based interface makes RViz flexible for debugging sensor data, validating LiDAR performance, and analyzing the robot’s navigation behavior. This setup is typically used to evaluate how the scrubber robot builds maps, perceives obstacles, and localizes itself while performing autonomous cleaning tasks in indoor environments.

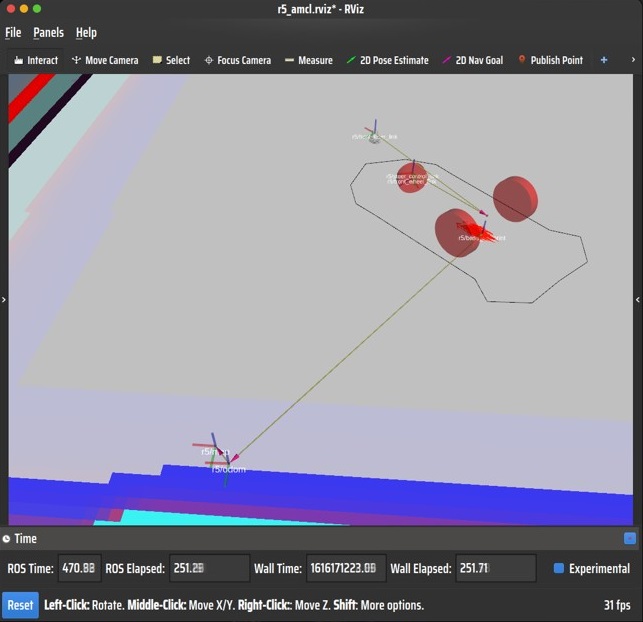
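To make the occupancy grid described above more concrete, the following sketch marks the cells along each LiDAR beam as free and the cell at the beam's end point as occupied. The cell values (-1 unknown, 0 free, 100 occupied) follow the convention of the ROS `nav_msgs/OccupancyGrid` message. The function `update_grid` and its simple fixed-step ray marching are assumptions made for illustration; the mapping package actually used on the robot is not named in this document and will use a more careful update rule.

```python
import math

UNKNOWN, FREE, OCCUPIED = -1, 0, 100

def update_grid(grid, resolution, robot_xy, ranges, angles, max_range):
    """Mark free cells along each beam and an occupied cell at each hit.

    grid: list of rows, grid[row][col]; cell (0, 0) starts at world (0, 0).
    resolution: cell size in metres. robot_xy: robot position in metres.
    """
    rows, cols = len(grid), len(grid[0])
    rx, ry = robot_xy

    def cell(x, y):
        return math.floor(y / resolution), math.floor(x / resolution)

    for r, a in zip(ranges, angles):
        reach = min(r, max_range)
        # Step along the beam one cell length at a time, clearing cells.
        for k in range(int(reach / resolution)):
            d = k * resolution
            row, col = cell(rx + d * math.cos(a), ry + d * math.sin(a))
            if 0 <= row < rows and 0 <= col < cols:
                grid[row][col] = FREE
        # A return shorter than max_range means the beam hit something.
        if r < max_range:
            row, col = cell(rx + r * math.cos(a), ry + r * math.sin(a))
            if 0 <= row < rows and 0 <= col < cols:
                grid[row][col] = OCCUPIED
    return grid

# 5 x 5 grid of 1 m cells, robot in the left-middle cell, one beam pointing
# along +x that hits an obstacle 3 m away.
grid = [[UNKNOWN] * 5 for _ in range(5)]
update_grid(grid, 1.0, (0.5, 2.5), ranges=[3.0], angles=[0.0], max_range=10.0)
```

In the RViz view, occupied cells would appear black and free cells white, while cells that no beam has crossed remain unknown.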

The videos below show the motion simulation of the first type of robot and its actual turning behavior. The minimum turning radius of this robot is larger than that of the second type of robot.